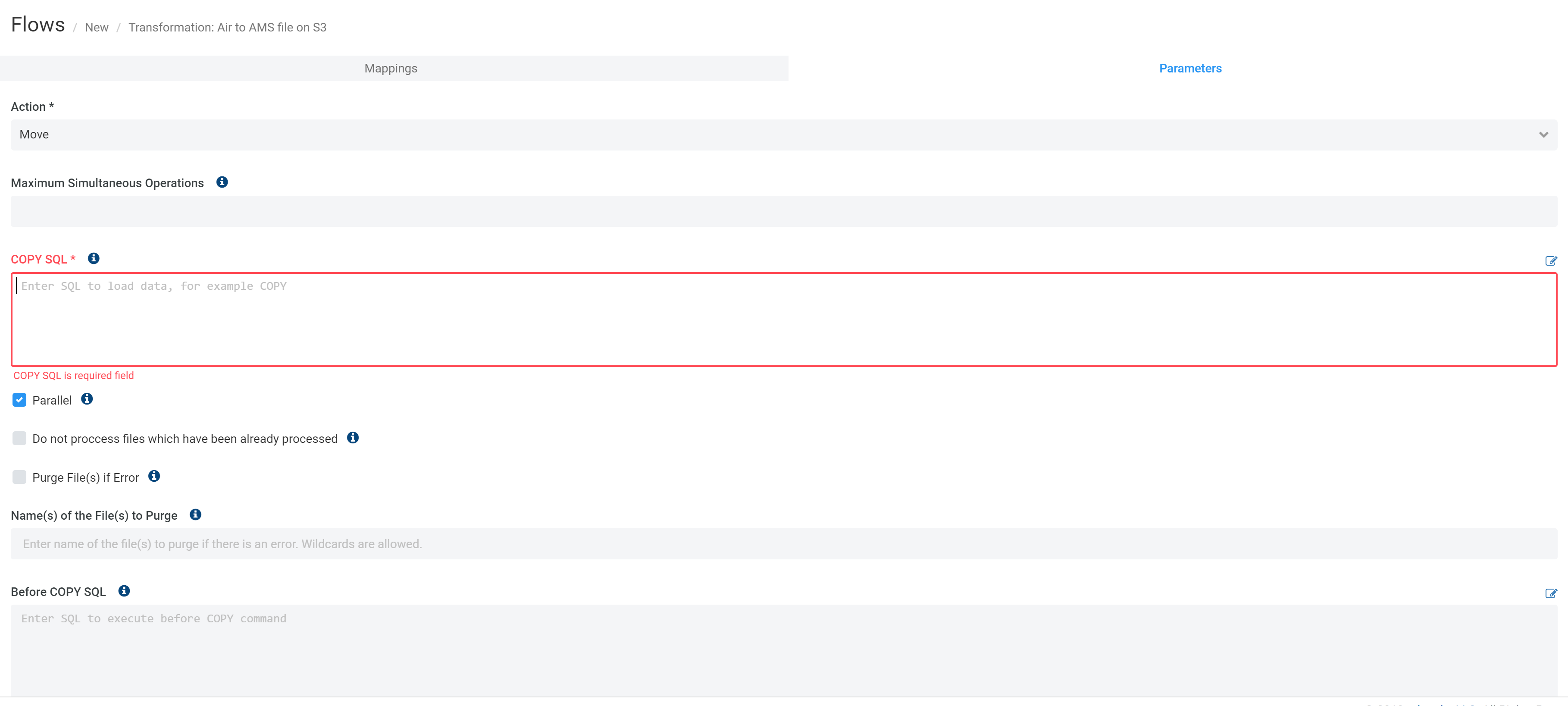

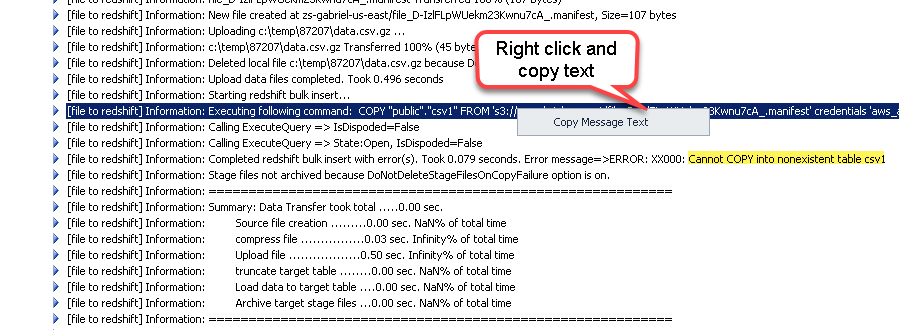

Redshift is a column-based relational database. Therefore, you can use the same techniques you would normally use to work with relational databases in Etlworks Integrator. It is, however, important to understand that inserting data into Redshift row by row can be painfully slow. It is recommended that you use a Redshift-optimized Flow to ETL data into Redshift.

Choose the Redshift-optimized Flow type based on what you need to do:

- When you need to extract data from any source, transform it, and load it into Redshift.
- When you need to bulk-load files that already exist in S3 without applying any transformations. The Flow automatically generates the COPY command and MERGEs data into the destination.
- When you need to stream updates from a database that supports Change Data Capture (CDC) into Redshift in real time.
- When you need to stream messages from a message queue that supports streaming into Redshift in real time.
- When you need to bulk-load data from file-based or cloud storage, an API, or a NoSQL database into Redshift without applying any transformations. This Flow requires a user-defined COPY command. Unlike Bulk load files in S3 into Redshift, this Flow does not support automatic MERGE.

Using Redshift-optimized Flows, you can extract data from any supported source and load it directly into Redshift. A typical Redshift Flow performs the following operations:

- Compresses files using the gzip algorithm.
- Checks whether the destination Redshift table exists and, if it does not, creates the table using metadata from the source.
- Dynamically generates and executes the Redshift COPY command.
- Cleans up the remaining files, if needed.

Before you begin, make sure that:

- Redshift is up and running and available from the Internet.
- The Redshift user has the INSERT privilege for the table(s).
- The Amazon S3 bucket is created, and Redshift is able to access the bucket.

Here's how you can extract, transform, and load data in Amazon Redshift:

Step 1. You will need a source Connection, an Amazon S3 Connection used as a stage for the file to load, and a Redshift Connection. When configuring the Amazon S3 Connection that will be used as a stage for the Redshift Flows, it is recommended that you enable GZip archiving.

Redshift can load data from CSV, JSON, Avro, and other data exchange Formats, but Etlworks Integrator only supports loading from CSV, so you will need to create a CSV Format. When configuring the CSV Format, it is recommended to set the Value for null field to \N so that the Redshift COPY command can differentiate between an empty string and a NULL value. If you are using a CSV Format to load large datasets into Redshift, consider configuring the Format to split the document into smaller files: Redshift can load files in parallel, and transferring smaller files over the network can be faster.

Start creating Redshift Flows by opening the Flows window, clicking +, and typing redshift into the Search field. Continue by selecting the Flow type, adding source-to-destination transformations, and entering the transformation parameters. For all Redshift Flows, the final destination is redshift.
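The staging recommendations in this article (split large CSV output into smaller files, gzip them, and write \N for NULL) can be illustrated with a minimal Python sketch. This is not the code Etlworks Integrator runs; the function names, the file naming scheme, the header row, and the table, bucket, and IAM role values are all hypothetical. The sketch only writes local files and builds a COPY statement as a string; it does not upload to S3 or connect to Redshift.

```python
import csv
import gzip
from itertools import islice
from pathlib import Path

# Marker written for NULL values, as recommended for the CSV Format.
NULL_MARKER = r"\N"


def write_gzip_csv_chunks(rows, header, out_dir, rows_per_file=100_000, prefix="part"):
    """Write rows into several gzip-compressed CSV files and return their paths.

    Hypothetical helper: each file gets the header row plus at most
    rows_per_file data rows.
    """
    out_dir = Path(out_dir)
    out_dir.mkdir(parents=True, exist_ok=True)
    iterator = iter(rows)
    paths = []
    while True:
        chunk = list(islice(iterator, rows_per_file))
        if not chunk:
            break
        path = out_dir / f"{prefix}_{len(paths):04d}.csv.gz"
        with gzip.open(path, "wt", newline="", encoding="utf-8") as handle:
            writer = csv.writer(handle)
            writer.writerow(header)
            for row in chunk:
                # None becomes \N so that NULL stays distinct from an empty string.
                writer.writerow([NULL_MARKER if value is None else value for value in row])
        paths.append(path)
    return paths


def build_copy_command(table, s3_prefix, iam_role):
    """Build a COPY statement for gzip-compressed CSV files that have a header row.

    Assumption: the statement a real Flow generates may use different options.
    """
    return (
        f"COPY {table} FROM '{s3_prefix}' "
        f"IAM_ROLE '{iam_role}' "
        "CSV GZIP IGNOREHEADER 1 "
        f"NULL AS '{NULL_MARKER}'"
    )
```

For example, `write_gzip_csv_chunks(rows, ["id", "name"], "stage", rows_per_file=50_000)` would produce files such as `stage/part_0000.csv.gz`. After they are uploaded under a common S3 key prefix, a single COPY that points at that prefix lets Redshift load them in parallel.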
